It's time for an OpenOffice.org benchmark rematch. Go-oo has been proudly boasting it is "Better, Faster, Freer," but when we last tested OpenOffice.org 2.4, Go-oo came in fourth place out of four. Since then the Go-oo developers have addressed some problems, so now we're checking the new version 3.0 and adding benchmarks on Windows.

Editions

The following editions were tested. The abbreviated code identifies each edition in the charts.

Ubu Go-oo: Universal Linux build of Go-oo 3.0.0 on Ubuntu.

Ubu PPA: OpenOffice.org 3.0.1 on Ubuntu. Ubuntu 8.10 normally comes with OpenOffice.org 2.4, so this is the version from the popular OpenOffice.org PPA. Like the OpenOffice.org edition that ships with Ubuntu, PPA is based on Go-oo.

Ubu StarOffice: StarOffice 9 on Ubuntu with Java JRE 1.6.

Ubu Vanilla: Vanilla OpenOffice.org 3.0.1 on Ubuntu. Vanilla means it is the unmodified version available from www.openoffice.org.

Win Vanilla: Vanilla OpenOffice.org 3.0.1 on Windows without a Java JRE.

Win Portable: Portable OpenOffice.org 3.0.1 on Windows. It modifies the vanilla edition by compressing executables, removing some files, and making other changes.

Win StarOffice: StarOffice 9 on Windows with Java JRE 1.6.

Environment

The test environment is a modest machine from several years ago:

- Operating system: Microsoft Windows XP SP3

- Operating system: Ubuntu 8.10 (Intrepid Ibex) with Linux kernel 2.6.27

- CPU: AMD Athlon XP 3000+ (32-bit, single core)

- RAM: 1.5 GB DDR 333 (PC 2700)

- Hard disk drive: SAMSUNG HD501LJ 500 GB SATA

- Video: Via VT8378 S3 Unichrome IGP at 1024x768

Benchmark process

As in previous benchmarks, this OpenOffice.org benchmark uses automation to precisely measure the duration of a series of common operations: starting the application, opening a document, scrolling through from top to bottom, saving the document, and finally closing both the document and application. Automation is much more precise than a human with a stopwatch, and with the small durations in these tests, automation is necessary. Each of the five tests is repeated for 10 iterations. Before each set of 10 iterations, the system reboots. The purpose of rebooting is to measure the difference in cold start performance, where information is not yet cached in fast memory. A reboot marks a pass, and there are 10 passes. That means for each edition of OpenOffice.org, there are 100 iterations. Multiplying by 5 tests and by 7 editions of OpenOffice.org yields 3500 total measurements collected for this article.
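To make the structure of a pass concrete, here is a minimal Python sketch of a timing loop and of the measurement count. It is only an illustration under stated assumptions: the step names and the empty placeholder functions are hypothetical stand-ins, not the automation calls actually used for this article.

```python
import time
from typing import Callable, Dict

PASSES = 10       # one reboot per pass
ITERATIONS = 10   # iterations per pass; the first one after a reboot is the cold start
EDITIONS = 7
TESTS = ["start", "open", "scroll", "save", "close"]  # hypothetical step names


def time_step(step: Callable[[], None]) -> float:
    """Return the wall-clock duration of one step in seconds."""
    begin = time.perf_counter()
    step()
    return time.perf_counter() - begin


def run_iteration(steps: Dict[str, Callable[[], None]]) -> Dict[str, float]:
    """Time each named step once, in the order given."""
    return {name: time_step(step) for name, step in steps.items()}


def total_measurements(passes: int, iterations: int, tests: int, editions: int) -> int:
    """Count the individual durations collected across the whole benchmark."""
    return passes * iterations * tests * editions


# Placeholder steps that do nothing; a real harness would drive the office suite here.
steps = {name: (lambda: None) for name in TESTS}
timings = run_iteration(steps)

print(total_measurements(PASSES, ITERATIONS, len(TESTS), EDITIONS))  # 3500
```

Using a monotonic, high-resolution clock such as `time.perf_counter` matters here because several of the measured steps last well under a second.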

Some editions don't have a quickstarter, so I disabled it for all editions as in previous articles.

Two steps back

Speed doesn't tell the whole value of an application. People buy cars based on price, reliability, appearance, fuel economy, storage capacity, and so on. Likewise an office suite is measured by its compatibility, reliability, and productivity. This article focuses on one aspect, speed, but gathering these observations revealed a sea of regressions related to the automation necessary to gather precise performance measurements. All the OpenOffice.org 3.0 editions tested suffered from more bugs than OpenOffice.org 2.4. StarOffice and Vanilla OpenOffice.org worked the best, while OxygenOffice and Go-OOo contained the most difficult bugs. None of these bugs would affect a typical user, but they made benchmarking using automation difficult and, in some cases, impossible.

Let's quickly count these bugs:

- Since before OpenOffice.org 3.0, automation sessions crash harmlessly on exit (issue 59026). No big deal.

- All Windows editions required a workaround for the error AttributeError: loadComponentFromURL (issue 90701).

- Go-OOo and OxygenOffice have a workaround for 90701, but it's worse on Windows.

- Using automation causes OxygenOffice and Go-OOo to crash on Fedora and Ubuntu (bug 479346). The bug is so severe it was not possible to test Go-OOo 3.0.1, OxygenOffice 3.0.0, or OxygenOffice 3.0.1. On Linux only Go-OOo 3.0.0 worked.

- A certain use of automation causes OxygenOffice 3.0 and Go-OOo 3.0 to crash on Windows (bug 487074) making them impractical to test on Windows.

- On Windows a regression prevented bootstrapping OOo (issue 99754). The workaround wasted a few hours.

- Python on Portable OpenOffice.org would immediately quit on one of my machines (the real test machine). The Windows Event Viewer logged these SideBySide errors until I installed the Microsoft Visual C++ runtime: "Dependent Assembly Microsoft.VC90.CRT could not be found and Last Error was The referenced assembly is not installed on your system." and "Generate Activation Context failed for C:\OpenOfficePortable\App\openoffice\Basis\program\python-core-2.3.4\bin\python.exe. Reference error message: The operation completed successfully."

- Portable OpenOffice.org froze four times during its 100 iterations.

While these regressions don't affect end users directly, OOo should remember Steve Ballmer's mantra "Developers, developers, developers." It is the developers who build a rich ecosystem, which makes OpenOffice.org attractive, especially to business users. Let's entice developers with an easy platform.

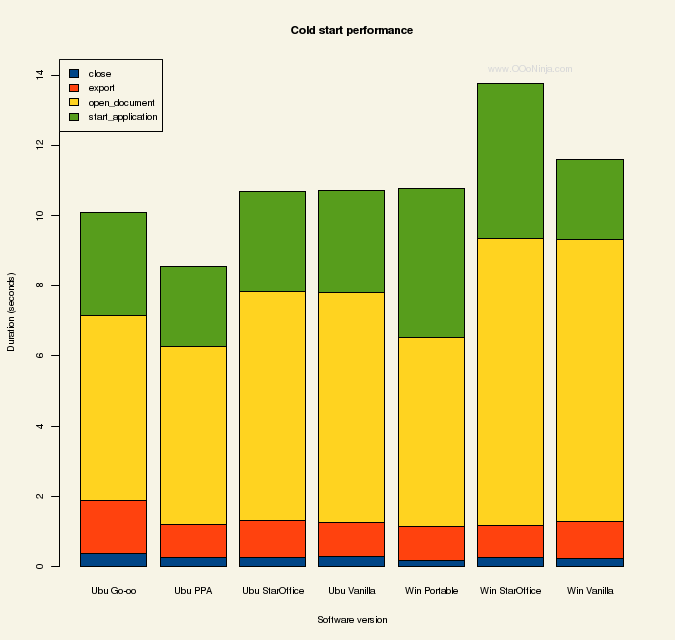

Results

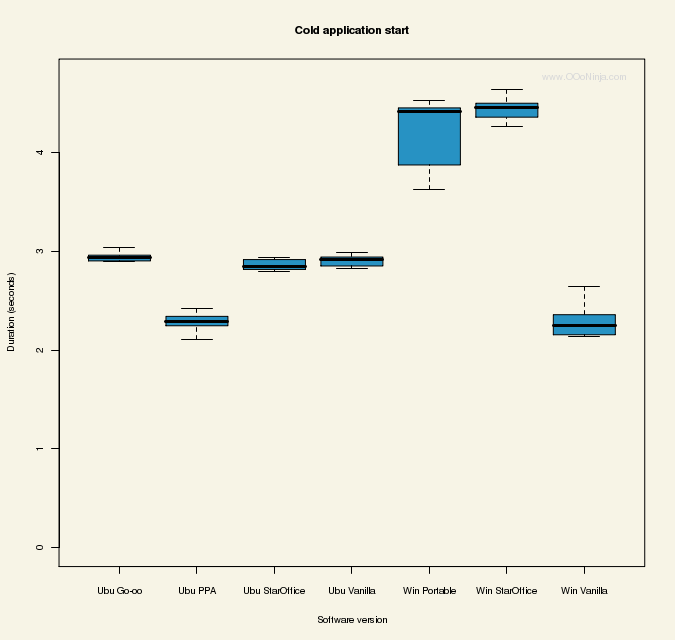

Cold start performance is the most critical factor to the perception of fast performance. While the Go-oo homepage brags about its fast startup, the results aren't a resounding win for Go-oo. Because of Go-oo bugs, it could not be tested on Windows, and on Linux it's a mixed bag for Go-oo where it takes first and last place. Clearly on Linux the PPA edition is the winner at 2.28 seconds, but it's the only one of the four Linux editions that can take advantage of system libraries already loaded by other programs such as GNOME. The three other editions (which ship with their own copies of system libraries to be compatible with different Linux distributions) are surprisingly indistinguishable considering the many performance factors such as features, patches, compiler version, and compiler options.

On Windows, I expected Portable OpenOffice.org to take the lead because of its use of compression, which minimizes disk I/O. (Disk I/O severely degrades cold start performance.) However, Vanilla OpenOffice on Windows won at 2.83 seconds. Vanilla's advantage over StarOffice may be a difference of features or that only StarOffice was tested with a Java JRE (which it installs by default).

These charts are boxplots (drawn using R) showing the median and the spread of each set of measurements, with outliers drawn as dots. Lower numbers are better.
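For readers unfamiliar with boxplots, the following Python sketch computes the kind of summary each box represents: the median, the quartiles, and the points far enough from the box to be drawn as outlier dots. It is an approximation under stated assumptions: the sample durations are made up for illustration, and the quartile method used here can differ slightly from the hinges R uses internally.

```python
import statistics
from typing import Dict, List


def box_summary(samples: List[float]) -> Dict[str, object]:
    """Summarize samples the way a boxplot does: quartiles plus 1.5 * IQR outliers."""
    q1, median, q3 = statistics.quantiles(samples, n=4, method="inclusive")
    iqr = q3 - q1
    low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    outliers = [x for x in samples if x < low or x > high]
    return {"q1": q1, "median": median, "q3": q3, "outliers": outliers}


# Hypothetical start times in milliseconds for one pass:
# one cold start followed by nine warm starts.
durations_ms = [2300, 1000, 900, 900, 1000, 900, 900, 1000, 900, 900]
summary = box_summary(durations_ms)
print(summary)
```

In this made-up pass the single cold start lands far above the box and would appear as a lone dot, which is why cold and warm starts are charted separately.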

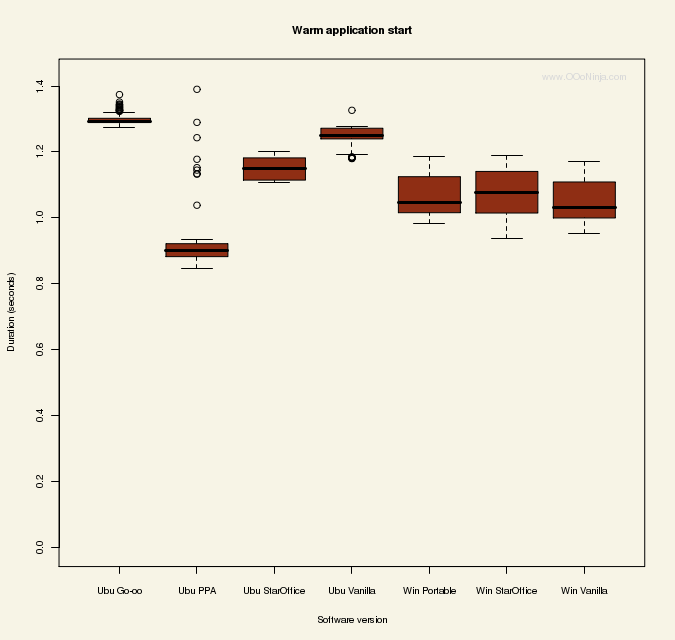

Another important performance metric is warm start performance, which means starting OpenOffice.org a second time in a row while the disk information is cached in RAM. Because of the cache, warm starts are always faster than cold starts. The dramatic difference between cold starts and warm starts shows the vital role of minimizing disk I/O. A quickstarter is intended to make all starts seem like warm starts, but quickstarters have their own drawbacks.

As in cold starts, Linux PPA takes the lead in warm starts at 0.93 seconds. The outliers (indicated by dots) show that Ubuntu PPA is unique: usually the second and all subsequent iterations have the same performance, but for Ubuntu PPA the second iteration scores halfway between the cold and warm starts. The three Windows editions are similarly grouped, and again, Go-oo takes last place.

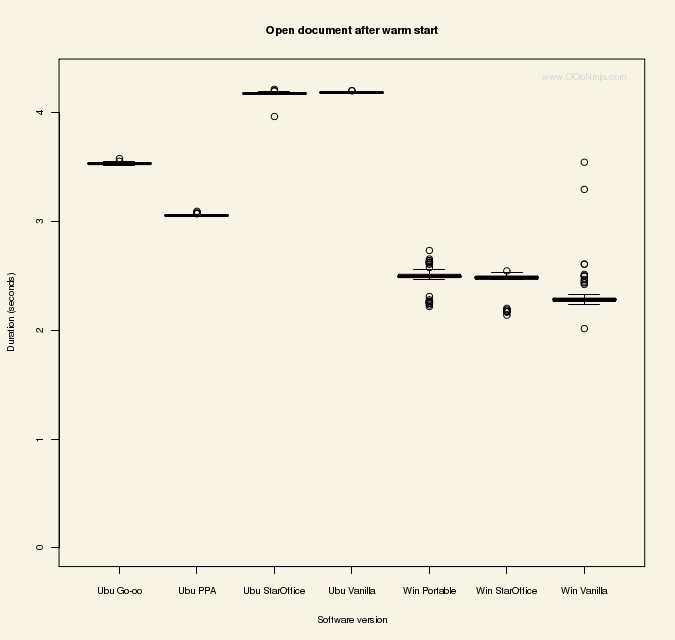

The next step in the benchmark is opening the reference document ODF_text_reference_v3.odt. When opening the document after a cold start, notice how similarly Vanilla and StarOffice perform on each platform. Likewise, Go-oo and PPA perform similarly, probably because system libraries wouldn't give an advantage in this operation. Finally, Portable has a 33% advantage over Vanilla, probably because of executable compression. StarOffice and Vanilla take last place, with PPA again taking first place.

Don't put all your money on Ubuntu yet: things look completely different when opening a document after a warm start. Now Windows takes a big lead over Ubuntu, and Vanilla Windows shows a dramatic 44% edge over Vanilla Ubuntu. Portable OpenOffice.org's executable compression weighs it down only a fraction in this race where CPU speed is paramount. Go-oo's patches (which are also in PPA) help but not enough to crush Redmond. It's hard to say with certainty, but the credit could be due to efficiency in Microsoft Visual C++ 9 (used to compile OpenOffice.org on Windows) over the open source GCC (used to compile OpenOffice.org on Linux).

The scrolling test simulates someone pressing the down arrow key to scroll from top to bottom. This test is so synthetic it won't be included in the final tally. Still, you can see Microsoft has the upper hand, which I attribute to better video drivers. Also, the difference between cold and warm starts illustrates how OpenOffice.org dynamically loads code into memory as necessary to render objects on the screen.

If you don't like waiting to save a text document, don't use the Go-oo Universal Linux build. Otherwise, all editions scored similarly with StarOffice taking its first victory by a slight margin.

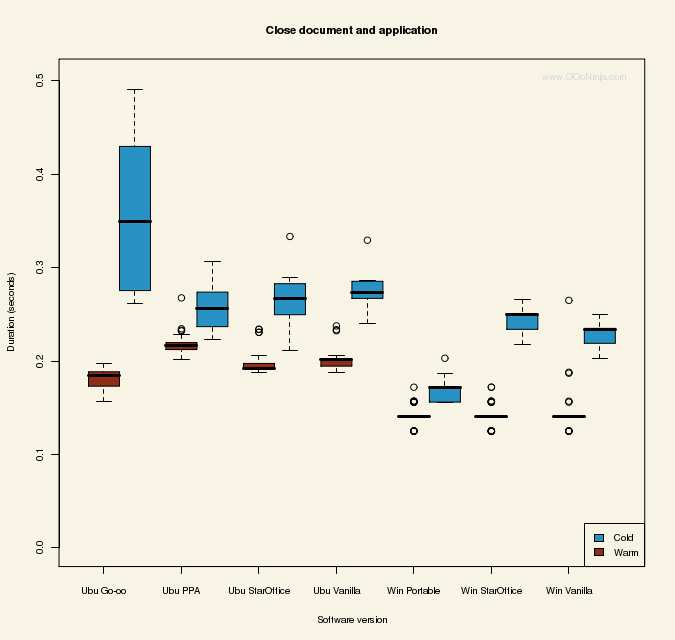

Now there is nothing left to do except close the document (which is already saved) and exit OpenOffice.org. Even here you can't shake the difference between cold and warm starts: OpenOffice.org is clearly accessing new data on the disk even while exiting. On Windows the warm start times are beautifully identical, and Portable's success over Vanilla in the cold start category implies OpenOffice.org is opening an internal DLL (perhaps to save settings to the OpenOffice.org registry). On Ubuntu, Go-oo takes first and last place after warm and cold starts (respectively). All these scores fit within my personal threshold for patience.

Counting all the races now, the champion of cold starts shouldn't surprise you if you read the last article. Last time the Fedora edition of OpenOffice.org licked its competitors on Fedora, and now Ubuntu PPA OpenOffice.org does the same on Ubuntu. Clearly there is a home field advantage on Linux: OpenOffice.org performs best when it avoids loading separate copies of libraries. As the Linux Standard Base (LSB) grows, maybe one day OpenOffice.org can avoid the library mess. Even so, Intrepid Ibex squeezes out a victory over Windows XP.

For people who use OpenOffice.org often, the warm start performance numbers carry more weight. Boosted by huge gains in the speed of opening a document, Windows XP whips the enduring Ibex.

Conclusion

Which OpenOffice.org edition is fastest? All OpenOffice.org editions and both operating systems performed well, and it's not possible to identify a single champion. Go-oo's tweaks often (but not always) gave it an advantage over Sun Microsystems' Vanilla edition, but OpenOffice.org PPA's system libraries gained the most substantial advantage.

OpenOffice.org 3.1 is just around the corner, and the rumor is the new performance improvements make it fast. I hope to see Go-oo and OxygenOffice fix the automation bugs, so they can be better represented in the next showdown. (Even more, I hope they upstream all their patches despite the political drama, but that's another story.)

Subscribe to www.OOoNinja.com's news feed in the top right of the page. Upcoming articles will delve into practical steps you can take to improve performance while shedding light on some performance myths.

Related articles

- New Features in OpenOffice.org 3.0 March 2008

- The Fastest OpenOffice.org Edition September 2008

- Is OpenOffice.org Getting Faster? May 2008

- Benchmarking Microsoft Word 95 through 2007 July 2008

23 comments:

"performed well"? What? You mean it's okay for an enhanced version of notepad to take 8 seconds to open a freaking text document? to take 7 seconds just to scroll from the top to the bottom?

ALL versions of openoffice performed, and still perform, TERRIBLE, but out of all that shamefulness, PPA seems to be not the worst.

Enhanced version of notepad? Ha ha ha, you crack me up.

I think the difference between Linux and Windows in the scrolling benchmark is due to differences in the video drivers.

"...to take 7 seconds just to scroll from the top to the bottom?"

I suspect you did not read the post very well. It was a simulation of someone clicking on the down arrow a bunch of times. A human couldn't even click the darn button fast enough to do that. Arguably that test was not very useful anyway. What if the Windows version moves more in a single click than the Linux version? We have no way of knowing...

In prior experience, I found that getting meaningful numbers for cold-boot startup time took many, many iterations. On my experiments, I ran an order of magnitude more iterations to get the jitter under control. Variances of over 2 seconds were observed in cold-boot startup time. Additionally, I found that a cool-down time of a few seconds decreased the variability of the startup times. That is, after the desktop boots, sleeping for a few seconds to let the system "cool down."

Scrolling or any other video operation in Linux is slow as hell due to the continued use of the antique "X Window System". Every other modern OS has made the GUI a part of the kernel for speed and efficiency, Linux has not. Though Apple uses a Unix core, they dumped the old Xorg in favor of their own, better designed system.

Yes, desktop composition systems like "Compiz Fusion" can speed up moving windows that have already been constructed, but all other operations, including window resizing, remain slow because they still rely on X.

> Yonah said...

> Scrolling or any other video

> operation in Linux is slow as hell due

> to the continued use of the antique "X

> Window System". Every other modern OS

> has made the GUI a part of the kernel

> for speed and efficiency...

You mean the way Windows incorporated the GUI into the kernel, thus making even the simplest of applications capable of crashing the entire system??? Yeah, *real* great advancement there, pal.

Well, nobody want to say it...

OpenOffice is faster on Windows.

Videos drivers could be an explanation for the scroll. But what about the startup ?

Any idea ?

tulcod: The test document is about 15 pages, so 0.5 seconds per page is reasonable. Try it yourself on any word processor by pressing the down arrow. (The exact document is linked, so you can try the same document.) The key press in the test was simulated at the OpenOffice.org level using the OOo API (not the keyboard/hardware/operating system level), so I assume it's not affected by OS differences like a keyboard setting.

mikeleib: Minimizing noise is important. When I first started benchmarking OOo, I tried various cold start simulators (like flushing the cache) that yielded inconsistent results. Once I switched from simulations to real reboots, instantly my results showed low variation. All my data is shown in the box plots above, so you can see how consistent it is. When writing this article, I didn't repeat any tests not shown here (for example, if I thought the data was inconsistent). Yes, I also sleep a moment after the desktop starts to let it "cool" (which seems to be most important on Windows). I've started writing benchmark guidelines which may help you?

Yonah: I blame the open source drivers for my Via Unichrome IGP. It can't handle Compiz at all, and it struggles with Warzone 2100 (3D game). However, I am fairly confident the only test significantly affected by the video drivers is scrolling.

Did you try Ubuntu with preload installed?

Does the Windows environment have a virus scanner installed? If not this is not a realistic comparison.

Jonathan: I haven't yet tried preload but I will consider testing it as I continue this series on performance.

Anonymous: No, I didn't use a virus scanner. While it's true most Windows users have one, each scanner has its own performance profile, so there is not a fair choice to pick any individual scanner. As much as possible, I used default settings everywhere, and I performed no optimizations to the OS or application.

Startup is likely to be faster on Windows because with XP and even more with vista Microsoft started cheating in the startup benchmark game. The OS remembers which programs are accessed during boot in the %SystemRoot%\Prefetch\Layout.ini file. It then automatically defragments them into the best position for fast access and so fast boot and startup times.

It's a rather sneaky trick as many of those files won't be needed again until the next time the system reboots.

PS: Be honest, an enhanced version of WORDPAD not notepad.

Yeah those cheats, how dare they improve startup performance of the apps you use all the time.

Nope, you're reading it wrong Anon. Not programs you use all the time, but programs you use first. The aim of the layout.ini is to reduce the time Windows takes in a boot benchmark.

If you're lucky and only use one or two programs (that probably have to have preloaders) it will probably help you.

Probably. But otherwise it speeds up boot at the expense of programs you use all the time.

Who said that "if a virus scanner were in place, it would not be a realistic comparison"? Actually, it would have been more realistic, since it is recommended forcefully to have anti-virus installed on Windows.

I was forced to install intrusion detection on my work machine, and it has a noticeable impact on compiling (for example).

Chris

Good stuff. And it's obvious that Windows needs an AV, just ask anybody.

Andrew, the performance improvements in 3.1 are rather occasional fixes. The "real stuff" will happen in 3.2 and later.

Thanks for your measurements, it looks like a lot of work you have done here.

Just a remark: when we applaud the PPA version for being faster because it profits from libraries loaded by the system, we shouldn't call the same thing in MSOffice "cheating". Both are just using their home field advantage.

Sorry, I don't want to appear as "anonymous". The last comment was from me, Mathias Bauer.

"noscript" obviously disabled the field for names. Now I fixed it.

You tested Go-Oo on Linux, but why not on Windows?

It would only have made sense as far as comparison goes for this test. I just have always been curious about the performance difference of Go-Oo between Windows and Linux because I believe Novell primarily codes on Linux.

Anonymous (April 6): I could not test Go-oo on Windows because of a critical bug. The article explains this in more detail.

When I benchmarked OpenOffice.org/StarOffice years ago I measured differences between Linux and Windows. There were differences between both operating systems regarding resolution of application symbols on operating system layer. On the other hand video drivers and video driver versions can make a big difference if you do a benchmark run. Cold start and warm start measures should be compared as well because you will measure the difference of cached symbols vs. real application start. Regards, Joost

"You mean the way Windows incorporated the GUI into the kernel, thus making even the simplest of applications capable of crashing the entire system??? Yeah, *real* great advancement there, pal."

I have 3 computers running Windows in my home that are used on a daily basis, and the last time one of them crashed was 3 years ago, and it wasn't even Windows' fault since it was caused by a hardware failure.

Linturd must be a POS seeing how you need to post made up BS about other OSes to defend it.
